Plenty has been written about what teachers should do on the first day of class. Should they do an ice-breaker? Dive right into notes? Review a few example questions to motivate the course?

I don’t really have control groups to use as a comparison, but I think these two activities were helpful and engaging, and I figured it was worth passing along.

Introduction to Statistics (Intro level, undergrad)

I stole this one from Gelman and Glickman’s “Demonstrations for Introductory Probability and Statistics.”

When the students came in, I split the class (approximately 25 students) into eight groups. Each group was given a sheet of paper with a picture on it and was tasked with estimating the age of the person pictured. I had some fun coming up with the pictures: I went back to the ’90s with T-Boz from TLC and Javy Lopez of the Atlanta Braves, added Sheryl Crow (who is, impossibly, 52 years old), and, my personal favorite, Flo from the Progressive commercials.

How old do you think Flo is?

Anyway, taking approximately one minute with each picture, the groups, without realizing it, started talking in terms of confidence intervals (“No way she’s not between 40 and 50”) and point estimates (“My best guess is 45”). That was good. At the end, I collected the pictures and revealed the ages.

Next, using one picture as an example, we made a table of some of the class guesses and calculated the estimation error for each group. This led to the obvious discussion of what metric would be useful for comparing group accuracy. For example, taking the average error would be problematic because the negatives and positives would cancel out. The class settled on mean absolute error, but we also discussed mean squared error and some form of relative error, which would account for the fact that guesses might be further off for older subjects.
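Here is a rough sketch of how those metrics compare for a single group, written in R. The ages and guesses are placeholders made up for illustration (only 44 and 52 appear in this post), and the variable names are mine; the numbers are chosen so that the signed errors cancel exactly.

```r
# Hypothetical true ages for four pictures (44 and 52 are from the post,
# the other two are invented for illustration)
true_ages <- c(44, 52, 25, 60)

# Hypothetical guesses from one group (illustrative only, not class data)
guesses <- c(47, 46, 28, 60)

errors <- guesses - true_ages

mean_error <- mean(errors)                      # signed errors cancel out
mae <- mean(abs(errors))                        # mean absolute error
mse <- mean(errors^2)                           # mean squared error
rel_error <- mean(abs(errors) / true_ages)      # mean absolute relative error

c(mean_error = mean_error, mae = mae, mse = mse, rel_error = rel_error)
```

With these made-up numbers the average error is 0 even though the group missed three of the four ages, which is exactly the cancellation problem the class spotted; the mean absolute error is 3 years.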

If you were wondering, most groups had an average absolute error of about 5 or 6 years (the winning group was less than 3), and Flo is 44 years old.

Probability and Statistics (upper level)

Rocks-paper-scissors (RPS) is one of my favorite games, so I split the class (approximately n = 25 students) into pairs to play a best-of-20 RPS series. After each throw, each student was responsible for writing down their choice for that turn (R, P, or S). At the end of the series, we had a set of n sequences, with each element of a sequence drawn from the letters ‘R’, ‘P’, and ‘S.’

Next, we did some quick analysis of these sequences. While several metrics were possibly of interest, I focused on the longest run of the same throw made consecutively. For example, if the sequence went:

R, R, P, P, P, S, P, S

the longest run would have been three (3 consecutive papers).
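For anyone who wants to check a sequence by machine rather than by eye, here is a minimal sketch using base R’s `rle()` (run-length encoding). The helper name `longest_run` is my own invention for this illustration, and the example writes scissors as "S".

```r
# Return the length of the longest run of identical throws,
# along with the throw that produced it (first one, if tied)
longest_run <- function(throws) {
  runs <- rle(throws)              # lengths and values of each run
  i <- which.max(runs$lengths)     # index of the first longest run
  list(length = runs$lengths[i], throw = runs$values[i])
}

example_seq <- c("R", "R", "P", "P", "P", "S", "P", "S")
longest_run(example_seq)
```

For this sequence the function reports a longest run of 3, made up of papers.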

Next, we compared the class’s distribution of longest runs to what would have occurred had the throws been chosen at random. This was easy to do using R/RStudio.

set.seed(100000)

omega<-c("Rocks","Paper","Scissors")

x<-sample(omega,20,replace=TRUE)

x

rle(x)

max(rle(x)$lengths)

maxSeq<-NULL

for (i in 1:10000){

maxSeq[i]<-max(rle(sample(omega,20,replace=TRUE))$lengths)

}

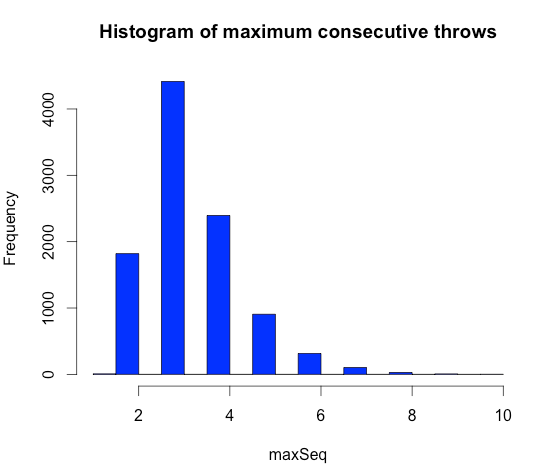

hist(maxSeq,col="blue",main="Histogram of maximum consecutive throws")

table(maxSeq)/10000

And here’s the resulting histogram, which represents the frequency of maximum consecutive throws in 10,000 randomly drawn sequences of 20 RPS throws.

The mode of the histogram is 3, and the class was quick to pick up on the fact that, if throws were randomly drawn, we would have expected more maximums of 4 than of 2. Of course, in our class of about 25 students, there were many more 2’s than 4’s. Such evidence is not surprising, however, given that people tend to underestimate how streaky truly random sequences are (for examples, Wikipedia has a few). Our class results help to confirm that tendency, and the activity also gives students the chance to meet one another, learn about Monte Carlo techniques, and gain a quick introduction to R/RStudio, all while playing Rocks-Paper-Scissors. I also ended by showing the class this New York Times RPS game, in which you can play the computer on either ‘novice’ or ‘expert’ mode.
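To put the comparison on the same scale as the class, the simulated proportions can be turned into expected counts for a class of about 25. This is only a sketch: it reruns the simulation with an arbitrary seed of my choosing, so the numbers will differ slightly from the run shown earlier, and `class_size`, `sim_max`, and `expected_counts` are names introduced here for illustration.

```r
set.seed(1)  # arbitrary seed, chosen only to make this sketch reproducible

omega <- c("Rocks", "Paper", "Scissors")
n_sims <- 10000
class_size <- 25  # approximate class size mentioned in the post

# Longest run in each of n_sims random series of 20 throws
sim_max <- replicate(
  n_sims,
  max(rle(sample(omega, 20, replace = TRUE))$lengths)
)

# Simulated proportion of series with each longest-run length
sim_prop <- table(sim_max) / n_sims

# Expected number of students with each longest run, if throws were random
expected_counts <- round(class_size * sim_prop, 1)
expected_counts
```

Setting the class’s own tally of longest runs beside `expected_counts` makes the excess of 2’s and the shortage of 4’s easy to see.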

Obviously, more went into the courses after these two activities, but I think I’ll go back to them in the future. It was certainly more fun than starting with the syllabus.


Thanks for sharing this exercise – I borrowed it last night for opening night of my MBA Quantitative Analysis class and it went over extremely well. A lot of good discussion and teaching points came from it.

Since it was an MBA course and many students had exposure to estimates and confidence intervals, I asked each group to provide both an age estimate and a 95% confidence interval for the age of each subject. Across the class, 37.5% of the true ages fell outside of the students’ 95% confidence intervals. So, while trying to create 95% confidence intervals, they actually created intervals with only 62.5% coverage. This led to an interesting discussion about overconfidence in estimation.

Hi Kyle,

That’s awesome. Really good idea to use confidence intervals – I should come back to that idea now!

If you haven’t seen this activity from the OpenIntro group, I really like it for teaching about confidence levels. It goes through possible linear associations, and almost always, my class’s expectations line up with about 95% confidence.

https://www.openintro.org/stat/why05.php?stat_book=os

Take care,

Mike