While Nate Silver’s FiveThirtyEight website still has some work to do to meet its high expectations, the site gave the World Cup a pretty strong effort. This included in-depth features on important players, comparisons of numbers from this year’s tournament to past ones, and lots of work regarding the United States’ run into the knockout round.

Excluding the “Weekly round-up” posts, the site posted a pretty remarkable 88 World Cup articles in the past 35 days, or roughly 2.5 per day.

In any case, one of the most popular features of 538 over the past several weeks has been its game picks, in which Nate’s statistical model generates game outcome probabilities. For the group stage, this entailed three-way probabilities (allowing for the possibility of ties), and for the knockout stage, 538’s model gave a probability for each team advancing.

Given the model’s fascination with Brazil, and the home team’s surprise drubbing at the hands of Germany, these picks became a sounding board for readers to criticize 538 and its position as an analytics leader. If you are interested in this reaction, Phil posted some really good tweets here, and I really liked some of the comments here, including someone who wrote “Econometrician here. His model is a fiecking disgrace.”

In any case, while I don’t have much time to dig into his picks, you’ll be happy to know that Nate Silver’s numbers were far from a disgrace. To be specific, they were more accurate than what roughly 19 out of 20 people would have managed by chance alone. You can read more about my methodology for claiming this here.

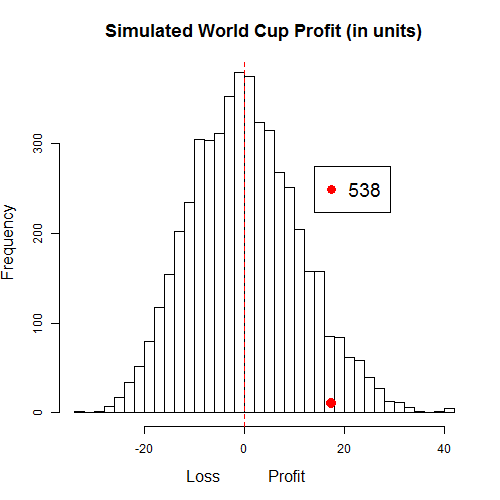

I ran numbers on the entire tournament (excluding the third-place game), and Nate’s picks finished in the 94th percentile of simulations, using the bets and lines suggested by Seth Burn on StatsBomb. Here’s a histogram of simulated profit, with a point that depicts 538’s observed estimated profit of 17.4 units.

Of course, this success could have been due to chance, in which case Nate was just luckier than about 19 out of 20 people.
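For intuition about where that histogram should be centered, here is a short worked calculation. It assumes, as the R code at the end of this post does, that a one-unit bet on a team with line-implied win probability $p$ returns $1/p - 1$ units on a win and $-1$ on a loss, and that $p$ really is the team’s chance of winning (the “pure chance” scenario). The symbol $X$ for the net profit on a single bet is notation introduced just for this sketch.

$$
E[X] = p\left(\frac{1}{p} - 1\right) + (1 - p)(-1) = (1 - p) - (1 - p) = 0
$$

$$
\mathrm{Var}(X) = p\left(\frac{1}{p} - 1\right)^2 + (1 - p)(-1)^2 = \frac{1 - p}{p}
$$

Under these assumptions a bettor with no edge breaks even on average, so zero is the natural reference point for the simulated profits, and bets on long shots (small $p$) contribute the most spread.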

Here’s a breakdown of 538’s results (W-L record), given the game-probability implied by Seth’s lines.

On picks where 538 backed a team that sportsbooks listed as between a 50% and 70% favorite, the site finished a remarkable 17-4. This included the pick of Germany over Argentina in the Cup final.

Because the ranges in this table come from the sportsbooks’ lines rather than the probabilities shown on the 538 website, we shouldn’t expect 538’s win rate in each row to fall within that row’s range. For example, if Nate’s model recommended a team with 65% probability, but sportsbooks only had that team at 55%, the game result would show up in the table in the “50-60” row.
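A breakdown like this can be tabulated in a few lines of R. The sketch below is illustrative rather than the exact code behind the table: it uses only the first eight entries of the `probs` and `outcomes` vectors from the code at the end of this post, it assumes `probs` holds the line-implied probability of each pick, and the 10-point bin width and the names `bins`, `result`, and `record` are choices made for the example.

```r
# First eight picks from the vectors at the end of the post (a subset, for illustration)
probs    <- c(0.590, 0.568, 0.489, 0.198, 0.636, 0.687, 0.435, 0.345)
outcomes <- c(1, 1, 0, 0, 1, 1, 0, 0)

# Bin each pick by its line-implied probability: [0, 0.1), [0.1, 0.2), ...
bins <- cut(probs, breaks = seq(0, 1, by = 0.1), right = FALSE)
bins <- droplevels(bins)  # drop ranges that contain no picks

# Cross-tabulate bin against result to get a W-L record per bin
result <- factor(ifelse(outcomes == 1, "W", "L"), levels = c("W", "L"))
record <- table(bin = bins, result = result)
print(record)
```

Swapping in the full vectors for the eight values shown should give a complete W-L record per range, though the bin edges used for the table in this post may differ.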

In any case, this table actually gives me more confidence in 538’s model than I expected to have. 538’s success in picking the 2014 World Cup wasn’t just due to a couple of long-shots hitting it big; instead, it was a success in picking nearly all types of teams.

That is, of course, except for the one from Brazil.

Here’s the R code that I used, and thanks for reading.

```r
# Line-implied win probability of each team that 538's model picked
probs <- c(0.590, 0.568, 0.489, 0.198,
           0.636, 0.687, 0.435, 0.345, 0.199,
           0.125, 0.185, 0.530, 0.624, 0.641,
           0.778, 0.523, 0.581, 0.521, 0.081,
           0.390, 0.327, 0.740, 0.649, 0.744,
           0.471, 0.514, 0.347, 0.514, 0.854,
           0.349, 0.320, 0.441, 0.693, 0.492,
           0.483, 0.248, 0.129, 0.394, 0.192,
           0.355, 0.486, 0.520, 0.588, 0.187,
           0.370, 0.385, 0.284, 0.742, 0.474,
           0.600, 0.507, 0.490, 0.280, 0.248,
           0.282, 0.379, 0.342, 0.102, 0.530,
           0.253, 0.172, 0.668, 0.747)

# Result of each pick: 1 if it won, 0 otherwise
outcomes <- c(1, 1, 0, 0,
              1, 1, 0, 0, 0,
              0, 0, 1, 1, 1,
              1, 1, 1, 0, 0,
              1, 0, 1, 1, 0,
              1, 1, 1, 0, 1,
              0, 0, 1, 0, 0,
              1, 1, 0, 0, 0,
              1, 1, 1, 1, 0,
              1, 0, 0, 0, 1,
              1, 1, 1, 0, 0,
              0, 1, 0, 1, 1,
              0, 1, 1, 1)

mean(outcomes)  # share of picks that won
n <- length(probs)

# Net profit per one-unit bet: lose 1 unit, or win 1/p - 1 units
mat <- data.frame(probs, outcomes)
mat$net <- -1
mat[mat$outcomes == 1, ]$net <- 1 / mat[mat$outcomes == 1, ]$probs - 1
colSums(mat)[3]  # observed total profit

#Observed net 17.969 (through 24 games)
#Observed net 15.91 (through 7/8)

# Simulate B tournaments in which each pick wins with its line-implied probability
B <- 5000
Output <- matrix(nrow = B, ncol = 1)
for (i in 1:B) {
  mat1 <- mat
  mat1$SimOut <- rbinom(nrow(mat1), 1, mat1$probs)
  mat1$netsim <- -1
  mat1[mat1$SimOut == 1, ]$netsim <- 1 / mat1[mat1$SimOut == 1, ]$probs - 1
  Output[i, 1] <- sum(mat1$netsim)
}
Output

# Histogram of simulated profit, with 538's observed profit marked in red
png("C:/Users/PubHealthGuest/Documents/Posts/NSII.png", height = 500, width = 500)
hist(Output, breaks = 40, cex.lab = 1.3, cex.main = 1.5,
     main = "Simulated World Cup Profit (in units)",
     xlab = "Loss Profit ")
points(colSums(mat)[3], 10, pch = 16, col = "red", cex = 1.9)
legend(14, 275, "538", pch = 16, cex = 1.6, col = "red")
abline(v = 0, lty = 2, col = "red")
dev.off()

# Share of simulations that beat the observed profit
mean(Output > colSums(mat)[3])
```

I love that you did this for Silver’s projections. I have been reading Seth’s column, and I was pondering this very thing.

P.S. R is pretty much the best, amirite?!

Hi Matthias,

I just saw this! Sorry for not responding sooner, as generally the only comments I get are spam.

Glad you enjoyed the post – it seems many people were curious about Silver’s projections. Wish I had a larger sample size with which to work, both for more accurate judgements of his model and because that would mean more World Cup games!

-Mike

Yeah, I’d be curious to compare the 538 probabilities of a win with the actual outcomes over a long period of time. I’m not sure they’re available after the fact, though.